When I was Head of Paid Search at iProspect, I started noticing a pattern in interviews and among newcomers. I’d ask candidates (sometimes senior specialists with four or five years on the job) to walk me through how the Google Ads auction actually decides who shows up and who pays what. The answers were, almost without exception, vague. “Smart Bidding handles it.” “It’s based on Quality Score and bid.” “There’s a lot of AI or machine learning involved now.”

None of those answers was technically wrong, but each was just shallow enough that the person giving it couldn’t reason about the mechanics actually at work under the hood.

This is the cost of the convenience Google has been selling for the past decade. Smart Bidding, Performance Max, AI Max — these tools work. They genuinely do. But they’ve abstracted the underlying mechanics so cleanly that an entire generation of paid search specialists has come up without ever needing to understand them. And then performance dips, and the diagnostic toolkit is empty.

This article is for those specialists or for those who need a reminder. New ones, senior ones, anyone whose mental model of the auction stops at “Smart Bidding handles it.” The auction is still a Generalised Second-Price mechanism. It still uses Quality Score. It still has thresholds you can’t bid through. The machine is just placing the bids for you — inside the same mathematical box that’s been there since 2002.


The CPM Era and Why It Couldn’t Last (2000–2002)

When Google AdWords launched in October 2000, it had around 350 advertisers and operated on a CPM basis — cost per thousand impressions. You bought visibility, not clicks. Premium sponsorships were placed statically on results pages, completely disconnected from what the user had actually searched for.

That model was never going to survive contact with the medium. Search is, fundamentally, an intent signal. Charging per impression rewards advertisers for showing up, not for being relevant. The economic incentive was to write the most attention-grabbing ad you could, plaster it across as many queries as possible, and collect the impressions. The user experience suffered, the advertiser’s ROI suffered, and Google’s long-term position as the front door of the internet would have suffered too if they’d let it continue.

Meanwhile, Overture (formerly GoTo.com) had built a working alternative: pay-per-click on a pure first-price auction. Whoever bid the most got the top slot. It was simple, and it was already eating Google’s lunch in the small-business segment.

How Google Quietly Solved Search Advertising in 2002

In February 2002, Google launched AdWords Select with CPC pricing and minimum bids of $0.05. On the surface it looked like Google copying Overture. It wasn’t.

The change Google made was subtle and, in hindsight, one of the most consequential design decisions in the history of digital economics. Instead of ranking ads by bid alone, Google factored in historical click-through rate. The formula was:

Ad Rank = Maximum CPC × Historical CTR

Read that carefully. If Advertiser A bids $1.00 with a 1% CTR, Google expects to earn $0.01 per impression. If Advertiser B bids $0.50 with a 5% CTR, Google expects to earn $0.025 per impression. Under Google’s formula, Advertiser B wins — despite bidding half as much.
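To make the arithmetic concrete, here is a minimal Python sketch of the 2002 ranking rule using the two advertisers from the example. It models only max CPC × historical CTR and nothing else the real system may have considered.

```python
# Minimal sketch of the 2002 AdWords Select ranking rule:
#   Ad Rank = Maximum CPC x Historical CTR (expected revenue per impression)
# Advertisers A and B use the illustrative numbers from the text.

def ad_rank_2002(max_cpc: float, historical_ctr: float) -> float:
    """Expected revenue per impression, in dollars."""
    return max_cpc * historical_ctr

advertisers = {"A": (1.00, 0.01), "B": (0.50, 0.05)}

ranks = {name: ad_rank_2002(cpc, ctr) for name, (cpc, ctr) in advertisers.items()}
winner = max(ranks, key=ranks.get)

for name, rank in ranks.items():
    print(f"{name}: ${rank:.3f} expected per impression")
print("Top slot:", winner)
```

B wins the top slot because $0.025 per impression beats A’s $0.010, even though A’s bid is twice as high.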

This single equation aligned three sets of incentives that had previously been in tension:

- The user gets results that match their intent (because relevant ads outrank irrelevant ones).

- The advertiser gets rewarded for relevance with cheaper traffic and better positioning.

- Google maximises expected revenue per impression.

Everything that came after — Quality Score, Ad Rank thresholds, Smart Bidding, AI Max — is built on this foundation. Google didn’t beat Overture by bidding more aggressively. They beat them by changing what “winning” the auction meant.

What Actually Happens in a Generalised Second-Price Auction

This is the part most specialists struggle to understand. It matters more than people think.

In a pure first-price auction (Overture’s original model), the winner pays exactly what they bid. That sounds clean, but it creates a horrible market dynamic: bid shading. Every advertiser runs scripts that shave their bid down by a fraction of a cent, looking for the minimum amount needed to hold position. The market becomes unstable, prices oscillate, and computational overhead explodes.

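Google’s search auction uses a Generalised Second-Price (GSP) mechanism, which extends Vickrey’s second-price auction to a page with several ad slots. It is often mentioned alongside the Vickrey-Clarke-Groves model, but it is not the same mechanism. Here’s what actually happens when a query fires: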

- All eligible advertisers submit their max CPC bids.

- Google calculates each ad’s Ad Rank (more on the formula below).

- Ads are ordered by Ad Rank.

- The winner does not pay their max bid. They pay the minimum amount required to beat the Ad Rank of the advertiser directly below them, plus $0.01.

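The implication: your max CPC is your maximum exposure, not your actual cost. Raising it can win you a higher position. For any given position, though, the price is set by the Ad Rank of the ad below you, not by your own bid. That removes the pressure to shave bids by fractions of a cent and makes bidding close to your true valuation of a click a sensible default. Unlike a pure Vickrey auction, however, GSP doesn’t guarantee that truthful bidding is always optimal.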
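The pricing steps above can be sketched in a few lines of Python. To isolate the pricing rule, this sketch assumes every ad has equal quality, so Ad Rank reduces to the bid. The floor price for the last slot is a hypothetical value, since real floors come from Ad Rank thresholds that Google does not publish.

```python
# Minimal GSP sketch with quality held equal for every ad, so Ad Rank reduces
# to the max CPC bid. Each ad pays just enough to stay above the ad directly
# below it, plus $0.01, and never more than its own max CPC.
# FLOOR_CPC is a hypothetical price for the last slot.

INCREMENT = 0.01
FLOOR_CPC = 0.05

def gsp_prices(bids: dict[str, float]) -> list[tuple[str, float]]:
    """Return (advertiser, price per click) in slot order, top slot first."""
    ordered = sorted(bids.items(), key=lambda kv: kv[1], reverse=True)
    prices = []
    for i, (name, max_cpc) in enumerate(ordered):
        if i + 1 < len(ordered):
            price = ordered[i + 1][1] + INCREMENT  # beat the bid below by a cent
        else:
            price = FLOOR_CPC
        prices.append((name, round(min(price, max_cpc), 2)))
    return prices

# A raises its max CPC from $3.00 to $9.00; its price does not change.
print(gsp_prices({"A": 3.00, "B": 2.00, "C": 1.20}))
print(gsp_prices({"A": 9.00, "B": 2.00, "C": 1.20}))
```

Both calls return the same prices: A pays $2.01, B pays $1.21 and C pays the floor. Tripling A’s max CPC only raises its exposure.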

| Auction Type | Pricing Rule | Where Google Uses It |
|---|---|---|
| First-Price | Winner pays their bid | Google Ad Manager (display, programmatic) since 2019 |
| Second-Price (Vickrey) | Winner pays second-highest bid | Theoretical foundation |
| Generalised Second-Price | Winner pays the price needed to beat the Ad Rank below them, +$0.01 | Google Search auction, 2002 to today |

The First-Price Confusion (And Why It Doesn’t Apply to Search)

In 2019, Google Ad Manager — the platform handling display advertising and programmatic supply-side auctions — moved to a unified first-price model. This is true. It’s also where the confusion starts.

A lot of marketers, including some senior ones, took that change and assumed it applied to Google Ads search too. It does not. The Google Ads search auction has remained a modified GSP mechanism through 2024, 2025, and into 2026. Display is first-price. Search is still GSP. The fact that the same parent company runs both has muddied the waters. The inputs to Ad Rank have changed since 2005, but the search auction your Smart Bidding strategy competes in today still prices clicks with the same second-price logic.

If you’re using bid shading mental models for search, you’re solving the wrong problem.

Quality Score: The Compounding Discount Most People Underestimate

In mid-2005, Google replaced raw historical CTR with Quality Score: a 1-10 metric that combined three signals.

- Expected CTR — predicted click-through rate based on the keyword’s history, the ad’s history, the account’s overall performance, and the display URL’s track record.

- Ad Relevance — semantic match between the query, the keyword, and the ad copy.

- Landing Page Experience — added shortly after launch, refined in June 2008 to include load time. Evaluates content originality, navigation clarity, and the post-click experience.

Then layer on geographical performance, device performance, and a strong industry suspicion that on-site Analytics signals feed in too. That’s the Quality Score model that’s been more or less in place ever since.

Here’s the part nobody walks new hires through. Quality Score doesn’t just affect whether you show up. It changes what you pay when you do. The Actual CPC formula in a GSP auction is:

Actual CPC = (Ad Rank of competitor below ÷ Your Quality Score) + $0.01

Quality Score sits in the denominator. That’s not a small detail. Every point you gain compounds. A keyword with a Quality Score of 10 can pay a fraction of what a competitor with a Quality Score of 3 pays — even if the competitor is bidding five times more.
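Here is a minimal Python sketch of that formula with three hypothetical advertisers, one of whom bids five times more with a Quality Score of 3. It assumes Ad Rank is simply max CPC × Quality Score, ignoring assets and context, and caps each price at the advertiser’s own max CPC.

```python
# Minimal sketch of the Actual CPC formula quoted above:
#   Actual CPC = (Ad Rank of competitor below / Your Quality Score) + $0.01
# Assumes Ad Rank = max CPC x Quality Score (no assets or context) and caps
# the price at the advertiser's own max CPC. All advertisers are hypothetical.

INCREMENT = 0.01

def actual_cpc(rank_below: float, quality_score: int, max_cpc: float) -> float:
    return round(min(rank_below / quality_score + INCREMENT, max_cpc), 2)

# (name, max CPC, Quality Score)
ads = [
    ("you", 2.00, 10),         # Ad Rank 20
    ("competitor", 10.00, 3),  # bids five times more; Ad Rank 30
    ("third", 2.00, 6),        # Ad Rank 12
]

ordered = sorted(ads, key=lambda ad: ad[1] * ad[2], reverse=True)

# Price every ad that has a competitor below it; the last ad's price would
# come from Ad Rank thresholds instead, which this sketch does not model.
prices = {
    name: actual_cpc(below[1] * below[2], qs, max_cpc)
    for (name, max_cpc, qs), below in zip(ordered, ordered[1:])
}
print(prices)
```

Under these assumptions, the competitor pays $6.68 per click for the top slot. The Quality Score 10 advertiser pays $1.21 for the slot below it.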

It also affects minimum bid eligibility. High-quality ads can enter the auction with bids as low as $0.01. Low-quality ads face minimum entry bids that can sit at $5 or $10, effectively pricing them out entirely.

If Smart Bidding is doing the bidding for you, this is what determines how efficient those bids can possibly be. The algorithm cannot bid you out of a bad Quality Score. It can only spend your budget faster trying to overcome it.

The Aggregation Trap (Or: Why One Bad Landing Page Wrecks an Account)

This is the second-order point I rarely see specialists handle correctly.

Expected CTR and Ad Relevance are scored at the keyword level. Landing Page Experience is scored at the URL level — across the whole account.

That means a single slow, thin, poorly optimised landing page can suppress Quality Score across hundreds of keywords in dozens of campaigns simultaneously. I’ve audited accounts where the entire performance problem was traced back to one product page with a 4-second load time and almost no on-page content. Twenty campaigns were paying inflated CPCs because of it.

When Smart Bidding starts bidding more aggressively to hit your CPA target, and you can’t figure out why costs are climbing across unrelated campaigns, this is the first thing to check. The auction’s mathematics are unforgiving on this point.
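One way to run that check is to group keywords by final URL and count how many keywords and campaigns each weak page touches. The sketch below works on a hypothetical export of (campaign, keyword, final URL, landing page rating) rows. The rating labels mirror the Below average / Average / Above average buckets Google Ads shows per keyword, and the rows themselves are invented.

```python
# Hypothetical keyword export: (campaign, keyword, final_url, landing_page_rating).
# The rows are made up for illustration.

from collections import defaultdict

rows = [
    ("Brand", "acme shoes", "https://example.com/shoes", "Above average"),
    ("Generic", "running shoes", "https://example.com/p/123", "Below average"),
    ("Generic", "trail shoes", "https://example.com/p/123", "Below average"),
    ("Sale", "cheap running shoes", "https://example.com/p/123", "Below average"),
    ("Sale", "shoe sale", "https://example.com/sale", "Average"),
]

def weak_landing_pages(rows):
    """Group below-average keywords by final URL: (url, keywords, campaigns)."""
    keywords = defaultdict(set)
    campaigns = defaultdict(set)
    for campaign, keyword, url, rating in rows:
        if rating == "Below average":
            keywords[url].add(keyword)
            campaigns[url].add(campaign)
    # Worst offenders first: the page dragging down the most keywords.
    return sorted(
        ((url, len(keywords[url]), len(campaigns[url])) for url in keywords),
        key=lambda item: item[1],
        reverse=True,
    )

for url, n_keywords, n_campaigns in weak_landing_pages(rows):
    print(f"{url}: {n_keywords} keywords across {n_campaigns} campaigns")
```

On a real account, a single URL at the top of this list touching many campaigns is the pattern described above.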

The 2013 Update That Changed How SERP Real Estate Works

On 22 October 2013, Google updated the Ad Rank formula again. Extensions (now called assets) became part of the calculation. Sitelinks, location and call extensions started feeding into Ad Rank. Later formats such as callouts and structured snippets joined them as they launched.

| Era | Ad Rank Formula | What Won |
|---|---|---|
| Pre-2002 | CPM | Budget |
| 2002–2004 | Bid × Historical CTR | Click-through rate |
| 2005–2012 | Bid × Quality Score | Relevance + landing page |
| 2013–2017 | Bid × Quality Score + Extension Impact | SERP real estate |
| 2017–today | Bid × QS + Extensions + Context | Algorithmic intent matching |

What this created was a feedback loop with teeth. To get extensions to show, you needed Ad Rank. To get Ad Rank, you needed extensions to be active and historically performant. If your competitor was running every extension type and you weren’t, you were quietly being outranked even at identical bids and Quality Scores.

The other layer the 2013 update introduced was context. Search “auto repair” on mobile in a city centre, and Google prioritises call and location extensions. Same query on desktop in a suburb, and you get sitelinks and seller ratings. The same advertiser, with the same ads, can have very different Ad Ranks depending on who’s searching and from where.
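Google doesn’t publish how assets and context enter Ad Rank, so the following is a toy model only. It uses an additive asset term whose weights depend on the search context, and every weight is invented. It simply shows how one advertiser, with an identical bid and Quality Score, can end up with different Ad Ranks in different contexts, and how running no assets leaves ground to competitors who run them.

```python
# Toy illustration only: the real way assets and context enter Ad Rank is not
# public. We assume an additive asset term whose size depends on which asset
# types matter in a given context. Every weight below is invented.

ASSET_WEIGHTS = {  # hypothetical contribution of each asset type, by context
    "mobile_city_centre": {"call": 4.0, "location": 3.0, "sitelink": 1.0},
    "desktop_suburb": {"call": 0.5, "location": 0.5, "sitelink": 3.0, "seller_rating": 2.0},
}

def toy_ad_rank(bid: float, quality_score: int, assets: set[str], context: str) -> float:
    """Bid x QS plus the (hypothetical) value of assets eligible in this context."""
    weights = ASSET_WEIGHTS[context]
    asset_term = sum(weights.get(asset, 0.0) for asset in assets)
    return bid * quality_score + asset_term

full_assets = {"call", "location", "sitelink", "seller_rating"}
no_assets: set[str] = set()

for context in ASSET_WEIGHTS:
    with_assets = toy_ad_rank(2.00, 7, full_assets, context)
    without = toy_ad_rank(2.00, 7, no_assets, context)
    print(f"{context}: {with_assets:.1f} with assets vs {without:.1f} without")
```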

Ad Rank Thresholds: The Auction’s Bouncer

This is the misconception I encountered most often: the assumption that if nobody else is bidding on a keyword, you win the top slot for $0.01.

You don’t. Ad Rank Thresholds are the floor your ad has to clear before it shows at all, regardless of competition. They’re calculated dynamically per auction based on:

- Position — appearing above organic results requires a much higher threshold than appearing at the bottom of the page.

- Ad quality — lower-quality ads face higher thresholds as a quality control mechanism.

- User signals — location and device shift thresholds significantly.

- Query nature — commercial and YMYL (Your Money or Your Life) queries face higher thresholds than informational long-tail searches.

If you’re the only advertiser in the auction, you don’t pay $0.01. You pay the reserve price — the CPC needed to clear the threshold for the position you’re shown in. And if your Quality Score is too low to clear the threshold at all, you don’t show. Even with no competition.

This is why “low search volume keywords” sometimes refuse to deliver impressions even when nobody else is bidding. The threshold is the gatekeeper. Smart Bidding cannot pay its way past a threshold an advertiser fundamentally doesn’t qualify for.
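The sole-advertiser case can be sketched as follows. The sketch assumes Ad Rank is max CPC × Quality Score and that the reserve price is the CPC that just clears the threshold for the slot. The threshold values are hypothetical, because real thresholds are computed per auction and never exposed.

```python
# Minimal sketch of a one-advertiser auction with Ad Rank thresholds.
# Assumptions: Ad Rank = max CPC x Quality Score; threshold values are
# hypothetical. The reserve price is the CPC that just clears the threshold.

from typing import Optional

THRESHOLDS = {"top_of_page": 40.0, "bottom_of_page": 10.0}  # hypothetical

def solo_auction(max_cpc: float, quality_score: int) -> Optional[tuple[str, float]]:
    """Best position the ad clears and the reserve CPC it pays, or None."""
    ad_rank = max_cpc * quality_score
    for position in ("top_of_page", "bottom_of_page"):
        threshold = THRESHOLDS[position]
        if ad_rank >= threshold:
            reserve_cpc = threshold / quality_score
            return position, round(reserve_cpc, 2)
    return None  # nobody else bidding, and still no impression

print(solo_auction(5.00, 9))  # high quality: clears the top-of-page threshold
print(solo_auction(5.00, 3))  # same bid, lower quality: bottom of page only
print(solo_auction(9.00, 1))  # bigger bid, very low quality: does not show
```

In none of these cases does the ad pay $0.01, and in the last one it never shows, despite having no competition.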

The 2017 Shift That Tipped Power Back to Big Budgets

In May 2017, Google reweighted the Ad Rank threshold calculation. Quality Score still mattered, but bid amount was given heavier mathematical weight in determining whether an ad cleared the premium top-of-page threshold.

This had real consequences for the industry. Before 2017, a skilled specialist with a small budget could reliably outrank a clumsy competitor with deep pockets, just by being meticulous about account structure and Quality Score. After 2017, that asymmetric advantage shrank. CPCs in competitive B2B and ecommerce verticals climbed noticeably.

The other piece of the 2017 update was contextual relevance. Google started factoring temporal and contextual meaning into thresholds. If a keyword suddenly became associated with breaking news, thresholds adjusted in real time to favour informational results over commercial ones. This was the early signal of the algorithmic intent matching that defines the AI Max era today.

How Smart Bidding Actually Got Built (2007–2016)

Smart Bidding didn’t appear from nowhere in 2016. It was the end of a slow, decade-long transfer of control from human operators to machine learning models.

Conversion Optimizer (2007–2008). The first true automated bidding tool. You set a max CPA, the system optimised CPC bids per auction based on conversion probability. The catch: it required 300 conversions in 30 days to function (later reduced to 200). Only large advertisers could use it. This created a real asymmetry — enterprise advertisers had algorithmic optimisation that smaller competitors couldn’t access.

Enhanced CPC (2010). A hybrid model. You set the manual baseline bid, Google adjusted it up or down by a capped percentage (originally 30%) based on conversion likelihood. eCPC was the bridge that conditioned a generation of marketers to trust algorithmic adjustments. It survived as a default for over a decade and was finally deprecated in March 2025. Its retirement closed the manual bidding era completely.
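The original eCPC idea, as described here, can be sketched as a capped adjustment around the manual bid. The inputs (a predicted conversion rate for this auction and an average conversion rate) are hypothetical stand-ins, since Google never published eCPC’s internal model. The symmetric ±30% cap follows the description above.

```python
# Sketch of the original Enhanced CPC idea: start from the manual bid and
# scale it by how much more (or less) likely this auction is to convert than
# average, clamped to +/-30%. The conversion-rate inputs are hypothetical.

ECPC_CAP = 0.30

def ecpc_bid(manual_bid: float, predicted_cvr: float, average_cvr: float) -> float:
    """Manual bid adjusted by relative conversion likelihood, capped at +/-30%."""
    lift = predicted_cvr / average_cvr - 1.0
    adjustment = max(-ECPC_CAP, min(ECPC_CAP, lift))
    return round(manual_bid * (1.0 + adjustment), 2)

print(ecpc_bid(1.00, 0.06, 0.03))   # twice as likely to convert: capped at +30%
print(ecpc_bid(1.00, 0.012, 0.03))  # much less likely to convert: capped at -30%
```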

Smart Bidding (2016). Auction-time bidding. The algorithm calculates a unique bid for every individual query in roughly 100-300 milliseconds — faster than the SERP loads. This is what runs your campaigns today.

The 200+ Signals Smart Bidding Actually Looks At

Manual bidding gives you a handful of bid modifiers: device, location, time, audience. Crude tools. Smart Bidding evaluates over 200 signals simultaneously, not in isolation but in combinations the algorithm has learned correlate with conversion probability.

| Signal Category | What the Algorithm Sees |
|---|---|
| Device & OS | Specific device model, browser, OS version (iOS vs Android conversion gaps are real and large) |
| Spatial & Temporal | Hyper-local location, location intent, time of day, day of week |
| Behavioural History | Prior site interactions, remarketing list membership, cross-device browsing |
| Semantic Context | The actual query composition, the specific ad creative being shown |
| Offline Integration | Probabilistic models linking search behaviour to predicted in-store visits or sales |

The strategies most accounts run today:

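- Target CPA (tCPA) — optimises toward a specific cost-per-acquisition. The target acts as a ceiling on your average CPA rather than on every conversion, so individual conversions can cost more or less. The algorithm throttles spend if it can’t find conversions at or near the target.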

- Target ROAS (tROAS) — predicts conversion value, not just probability. Critical for ecommerce where cart values vary.

- Maximise Conversions / Maximise Conversion Value — budget-constrained. No CPA ceiling. The algorithm’s directive is to spend the daily budget while finding the most conversions or conversion value possible. This is the strategy where things go wrong fastest if you stop watching.
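A common simplified way to reason about the two target strategies is shown below. A tCPA bid scales with predicted conversion probability. A tROAS bid scales with predicted conversion value divided by the target ROAS (4.0 meaning 400%). This is a textbook model, not Google’s unpublished implementation, and the auction predictions are hypothetical.

```python
# Simplified textbook model of auction-time target bidding, not Google's
# actual (unpublished) implementation. p_conversion and expected_value stand
# in for what the model predicts from signals such as device, location,
# time and query; all numbers below are hypothetical.

def tcpa_bid(target_cpa: float, p_conversion: float) -> float:
    """Bid so that average cost per conversion lands near target_cpa."""
    return round(target_cpa * p_conversion, 2)

def troas_bid(target_roas: float, p_conversion: float, expected_value: float) -> float:
    """Bid so that expected conversion value per click / CPC is near target_roas."""
    return round(p_conversion * expected_value / target_roas, 2)

auctions = {
    "mobile, evening, near a store": (0.08, 120.0),
    "desktop, 3am, far from any store": (0.01, 60.0),
}

for label, (p, value) in auctions.items():
    print(f"{label}: tCPA bid ${tcpa_bid(50.0, p):.2f}, "
          f"tROAS bid ${troas_bid(4.0, p, value):.2f}")
```

The same keyword gets very different bids per auction, which is the practical difference from a single manual bid with a handful of modifiers.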

The Broad Match + Smart Bidding Logic That Most People Get Wrong

For years, broad match was the keyword type specialists feared. With manual bidding, broad match was financially ruinous — you’d spend half your budget on irrelevant queries before noticing.

That changed when Smart Bidding got good enough to evaluate intent at the auction level. The new logic:

- Use exact match, and you constrain the algorithm. It can only learn from the queries you’ve explicitly approved. Reach is limited.

- Use broad match with Smart Bidding, and you give the algorithm permission to scan the periphery for hidden intent.

When Smart Bidding sees a tangential broad match query, it evaluates conversion probability. If that probability is effectively zero, it bids nothing and sits out the auction, so the query costs you nothing. If probability is high, it bids aggressively. The financial risk of broad match collapses, because the algorithm is no longer obligated to spend on every match it finds.
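A toy comparison makes the point. It buys the same hypothetical broad match query mix two ways: with one flat manual CPC, and with a probability-weighted bid (target CPA × predicted conversion probability). It deliberately oversimplifies. Every auction with a non-zero bid is assumed won, click volume is assumed independent of the bid, and each click is charged the full bid, whereas real GSP prices would be lower.

```python
# Toy comparison, all numbers hypothetical: flat manual CPC vs a
# probability-weighted bid on the same broad match query mix.
# Simplifications: non-zero bids always win, clicks don't depend on the bid,
# and each click is charged the full bid.

TARGET_CPA = 40.0
MANUAL_CPC = 1.50

# (matched query, clicks available, predicted conversion probability)
queries = [
    ("running shoes for women", 100, 0.05),
    ("running shoe repair", 100, 0.0),
    ("how to tie running shoes", 100, 0.0),
]

def total_spend(bid_for) -> float:
    """Sum clicks x bid, where bid_for maps a conversion probability to a CPC."""
    return sum(clicks * bid_for(p) for _, clicks, p in queries)

manual_spend = total_spend(lambda p: MANUAL_CPC)     # bids on every match
smart_spend = total_spend(lambda p: TARGET_CPA * p)  # bids 0 where p is 0
conversions = sum(clicks * p for _, clicks, p in queries)

print(f"manual: ${manual_spend:.2f}, smart: ${smart_spend:.2f}, "
      f"conversions either way: {conversions:.0f}")
```

Under these assumptions, manual bidding spends $450 and probability-weighted bidding spends $200 for the same five conversions. The gap is exactly the spend on queries that were never going to convert.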

This is why Google’s recommendation stack has aggressively pushed broad match for years. It’s not because they’re trying to inflate your spend (despite what some agencies claim). It’s because broad match gives Smart Bidding more raw signal to work with, and Smart Bidding has the discrimination to bid zero on the irrelevant ones.

The corollary: if you’re using broad match with manual CPC bidding, you’re running the worst possible configuration. Either constrain match types or commit to Smart Bidding. Don’t half-do it.

Why None of This Goes Away in the AI Max Era

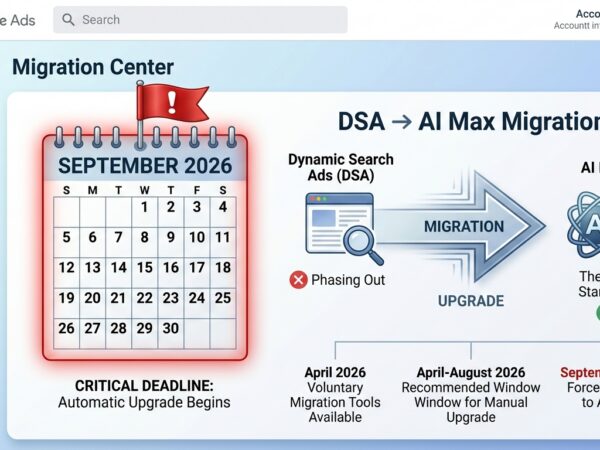

Performance Max launched in November 2021. AI Max for Search went GA on 15 April 2026. Both abstract keyword targeting almost entirely. Both rely on audience signals, dynamic asset generation, and Google’s AI choosing where and how to spend.

This is where most specialists I talk to assume the auction mechanics no longer matter. The reasoning goes: “Google’s AI is choosing the queries, writing the copy, picking the landing pages — what does Quality Score have to do with anything?”

Everything. The auction is still GSP. Ad Rank is still bid × Quality Score + extension impact + context. Thresholds still apply. AI Max is not bidding in a different auction. It’s bidding in the same auction, on your behalf, against the same constraints.

If your landing pages are slow, AI Max’s bids face a Quality Score penalty. If your account is structurally weak — poor conversion tracking, thin enhanced conversions, no offline conversion imports for lead-gen — Smart Bidding has worse signal to work with and bids less efficiently. If your ad assets are weak, the extension impact factor in Ad Rank works against you.

The advertisers I’ve seen win with AI Max and Performance Max are the ones who understood the underlying auction mechanics first, then layered automation on top. The advertisers losing are the ones who skipped step one and treated automation as a substitute for understanding the system.

Signal Starvation: The Failure Mode Nobody Talks About

Smart Bidding is a probabilistic model. Probabilistic models need data. Without enough conversion volume, the algorithm cannot map the auction topology accurately, and its bids degrade.

The industry rule of thumb is 30 conversions in 30 days as the minimum for Target CPA or Target ROAS to function reliably. Below that, the algorithm loses statistical confidence and either:

- Becomes overly conservative — refusing to bid on exploratory auctions, suffocating volume.

- Bids erratically (under Maximise Conversions without a CPA ceiling) just to spend the budget, picking up low-intent traffic in the process.
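Before switching strategies, a simple volume check against that rule of thumb can save weeks. The sketch below counts conversions in the trailing 30 days and compares them with the 30-conversion heuristic. The threshold is an industry rule of thumb rather than a hard platform limit, and the conversion dates are hypothetical.

```python
# Minimal readiness check based on the ~30 conversions in 30 days rule of
# thumb for Target CPA / Target ROAS. The threshold is a heuristic, not a
# platform limit; the conversion dates below are hypothetical.

from datetime import date, timedelta

MIN_CONVERSIONS_30D = 30

def conversions_last_30_days(conversion_dates: list[date], today: date) -> int:
    """Count conversions dated within the 30 days ending today (inclusive)."""
    start = today - timedelta(days=30)
    return sum(1 for d in conversion_dates if start < d <= today)

def ready_for_target_bidding(conversion_dates: list[date], today: date) -> bool:
    return conversions_last_30_days(conversion_dates, today) >= MIN_CONVERSIONS_30D

today = date(2026, 5, 1)
quiet_account = [today - timedelta(days=2 * i) for i in range(12)]  # 12 a month
busy_account = [today - timedelta(days=i % 30) for i in range(45)]  # 45 a month

print(ready_for_target_bidding(quiet_account, today))
print(ready_for_target_bidding(busy_account, today))
```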

I’ve seen campaigns move prematurely from eCPC to Target CPA, drop their CPA target to match historical performance, and watch volume collapse within a week. The fix isn’t usually a different strategy. It’s giving the algorithm runway. When you switch to Target CPA, set the target slightly above historical average. Tighten it gradually over weeks, once the algorithm has learned.
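The ramp can be written down as a simple weekly schedule. The 15% starting headroom and the 5%-per-week tightening step below are illustrative assumptions, not Google guidance. The point is the shape: start loose, tighten in small steps, and stop at the goal.

```python
# Sketch of a gradual Target CPA ramp. The 15% starting headroom and the
# 5%-per-week tightening step are illustrative assumptions, not Google guidance.

def tcpa_schedule(historical_cpa: float, goal_cpa: float,
                  headroom: float = 0.15, weekly_step: float = 0.05) -> list[float]:
    """Weekly Target CPA values, from a loose starting target down to goal_cpa."""
    if not 0 < weekly_step < 1:
        raise ValueError("weekly_step must be between 0 and 1")
    target = historical_cpa * (1 + headroom)
    schedule = [round(target, 2)]
    while target > goal_cpa:
        # Tighten by one step, but never below the goal.
        target = max(goal_cpa, target * (1 - weekly_step))
        schedule.append(round(target, 2))
    return schedule

# Historical CPA of $40, working back to $40 without starving the model.
print(tcpa_schedule(historical_cpa=40.0, goal_cpa=40.0))
```

A real ramp would only move to the next step once the previous week has stabilised, rather than on a fixed calendar.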

This is also why AI Max requires 4-6 weeks to stabilise after migration — Google’s own guidance, not mine. Signal starvation in the early weeks of a campaign type change is the most common cause of “AI Max didn’t work for us” stories I hear from peers.

What This Means for How You Actually Run Accounts

If you only take three things from this article, take these.

1. Smart Bidding can’t fix Quality Score. Every Quality Score point is a compounding discount in the GSP auction. The algorithm bids inside that constraint, not around it. Audit Expected CTR, Ad Relevance, and Landing Page Experience first. They’re the leverage.

2. Ad Rank Thresholds will block you regardless of bid. If you have keywords that “should be cheap” but never deliver impressions, the threshold is probably the gatekeeper. Quality Score and asset health are the way through, not bid increases.

3. Signal starvation is a real failure mode. When Smart Bidding underperforms, the first question is whether you have enough conversion volume for the strategy you’ve picked. Switching to Target CPA on an account doing 12 conversions a month is not optimisation. It’s setting the algorithm up to fail.

The mechanics haven’t changed. The interface has. Google has made it possible to run a campaign without ever thinking about Quality Score, Ad Rank, GSP, or thresholds. That doesn’t mean those things stopped operating — it means the specialists who do think about them have a sharper edge over the ones who don’t.

If you’re managing accounts today and your understanding of the auction stops at “Smart Bidding handles it,” you’re not running paid search. You’re filling out a form and hoping the algorithm does well enough to keep the lights on. The specialists who’ll still be relevant in the future are the ones who can look at an underperforming campaign, trace it back to a Quality Score problem, fix the structural cause, and let the automation do what it’s actually designed to do.

The auction is still the auction. It’s been there the whole time.